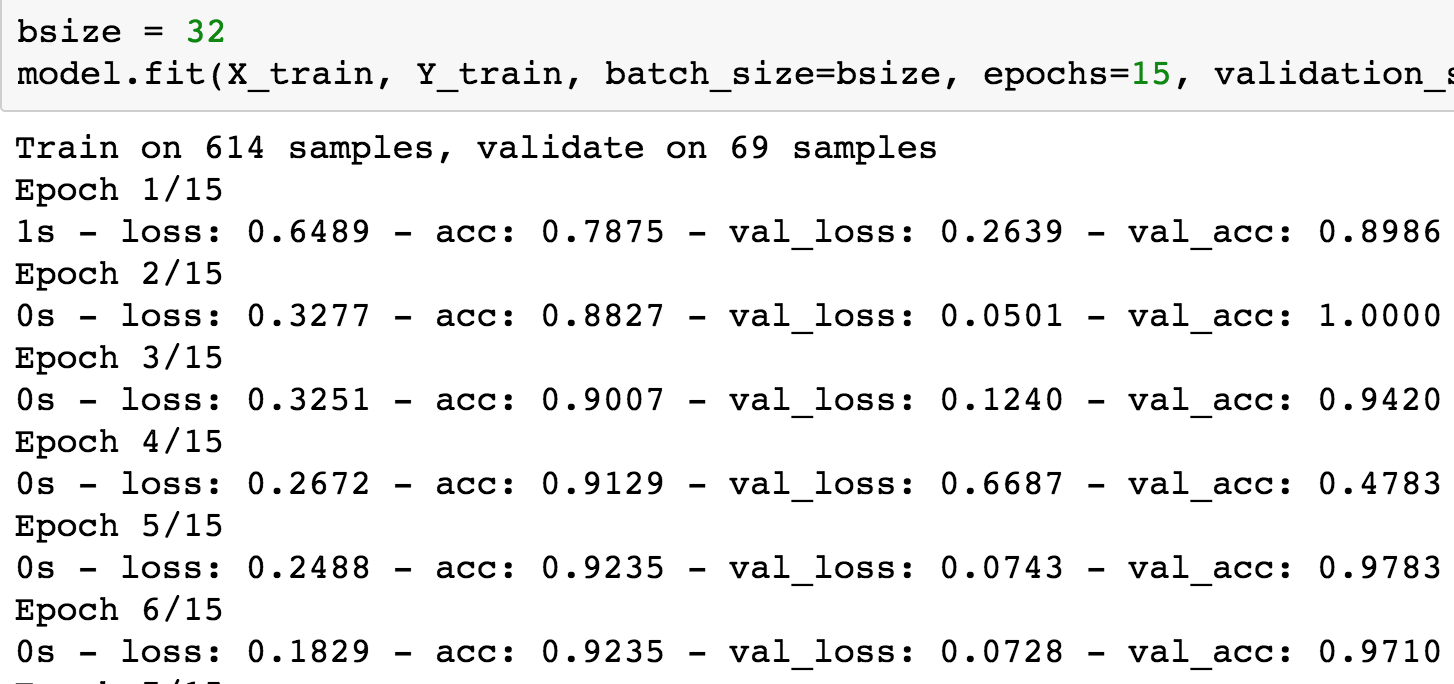

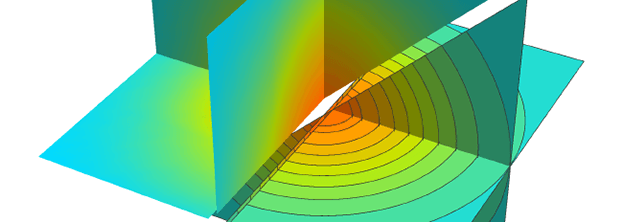

We extend the convolution layers in time and use a slow fusion model, which gradually combines temporal information in successive layers. The first convolution filters have size 3 and stride 2 in the time domain, the next layer has size 2 and stride 2, and the third layer combines all remaining temporal features. The deconvolution layers are extended in time as well and reverse the slow fusion, generating temporal features successively (see Figure 3). In the shorthand notation, FC(n) stands for a fully connected layer with n nodes, N stands for a local response normalization layer, and DC(n, f, s) stands for a deconvolution layer with n deconvolving filters of size f and stride s.

One of the main challenges in action recognition is assigning similar classifications to objects moving at different velocities. In this work we propose to learn the velocity of the sequence in parallel with its classification, using adaptive temporal interpolation. Our multi-velocity autoencoder combines 3 action autoencoders to access temporal features at different velocities.
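To make the idea of viewing one sequence at several velocities concrete, the following is a minimal sketch of temporal resampling. It assumes plain linear interpolation between neighbouring frames and a fixed velocity per view; the text does not specify the interpolation scheme, and the adaptive (learned) part is not modelled here. The function name `resample_sequence` and the velocities 0.5, 1.0 and 2.0 are hypothetical choices for illustration.

```python
def resample_sequence(frames, velocity, length):
    """Linearly resample `frames` so that playback is `velocity` times faster.

    frames: list of frames, each a list of floats (flattened pixels).
    velocity: playback speed factor; 2.0 skips ahead, 0.5 slows down.
    length: number of output frames.
    Positions past the last frame are clamped to the last frame.
    """
    last = len(frames) - 1
    out = []
    for i in range(length):
        t = min(i * velocity, last)   # fractional source position
        lo = int(t)                   # frame at or just before t
        hi = min(lo + 1, last)        # frame just after t (clamped)
        w = t - lo                    # blend weight toward `hi`
        out.append([(1 - w) * a + w * b
                    for a, b in zip(frames[lo], frames[hi])])
    return out


# Toy clip: 9 one-pixel frames whose value equals the frame index.
clip = [[float(i)] for i in range(9)]

# Three hypothetical velocities, one view per action autoencoder.
views = {v: resample_sequence(clip, v, length=5) for v in (0.5, 1.0, 2.0)}
```

In this toy setting each view covers a different time span of the same clip, which is one way three autoencoders could be fed temporal features at different velocities.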


We use convolutional filters with weight sharing in the first 6 layers, followed by 2 fully connected layers. The network is similar to networks used for ImageNet classification but accepts inputs of size 145 × 145 × 9. Using shorthand notation, the full architecture can be written as C(96, 11, 3) − N − C(256, 5, 2) − N − C(384, 3, 2) − N − FC(4096) − FC(4096) − DC(96, 11, 3) − N − DC(256, 5, 2) − N − DC(384, 3, 2), where C(n, f, s) stands for a convolution layer with n filters of size f and stride s.
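As a rough check on the encoder dimensions, the sketch below traces the spatial and temporal extent of a 145 × 145 × 9 input through the three convolution layers. It assumes unpadded convolutions and no pooling, neither of which is stated in the text. The normalization layers N do not change the size. The temporal filter sizes and strides are the ones given for the slow fusion (size 3, stride 2, then size 2, stride 2, then a layer that combines everything that remains).

```python
def conv_out(size, kernel, stride):
    """Output length of an unpadded ('valid') convolution along one axis."""
    return (size - kernel) // stride + 1


# Spatial (filter size, stride) pairs read off
# C(96, 11, 3), C(256, 5, 2), C(384, 3, 2).
spatial_layers = [(11, 3), (5, 2), (3, 2)]
# Temporal (filter size, stride) pairs for the first two slow-fusion layers.
temporal_layers = [(3, 2), (2, 2)]

side, frames = 145, 9
trace = [(side, frames)]
for i, (k, s) in enumerate(spatial_layers):
    side = conv_out(side, k, s)
    if i < len(temporal_layers):
        kt, st = temporal_layers[i]
        frames = conv_out(frames, kt, st)
    else:
        frames = 1  # third layer combines all remaining temporal features
    trace.append((side, frames))

for step, (s, t) in enumerate(trace):
    print(f"after {step} conv layers: {s} x {s} spatial, {t} temporal")
```

Under these assumptions the spatial side shrinks from 145 to 45, 21 and 10, while the 9 input frames are fused to 4, 2 and finally 1 temporal slice. Different padding or added pooling would change these numbers.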

Very recently, superior results have been shown using deep neural nets that combine labeled and unlabeled data in a single system. In this paper we follow those guidelines and train end-to-end a hybrid system composed of autoencoders for the unlabeled data and an additional loss function for the classification tasks.

Figure 2: Results from reconstruction using the temporal convolutional autoencoder on a face video. (b) Reconstruction after using 4 convolutional layers. (d) Reconstruction after using 12 layers.

3 Method

Our action autoencoder consists of a convolutional autoencoder for learning deep features and reducing the dimensionality of the data.
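The hybrid objective, an autoencoder loss on all data plus a classification loss on the labeled part, could be sketched as follows. This is an illustration under assumptions rather than the exact loss used here: mean squared error for reconstruction, softmax cross-entropy for classification, and the trade-off factor `weight` are all hypothetical choices not given in the text.

```python
import math


def mse(x, x_hat):
    """Mean squared error between an input and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def cross_entropy(logits, label):
    """Softmax cross-entropy for one sample, computed via log-sum-exp."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[label]


def hybrid_loss(batch, weight=1.0):
    """Reconstruction loss averaged over all samples, plus a weighted
    classification loss averaged over the labeled samples only.

    batch: list of (x, x_hat, logits, label); label is None if unlabeled.
    weight: hypothetical trade-off factor, not taken from the source.
    """
    recon = sum(mse(x, x_hat) for x, x_hat, _, _ in batch) / len(batch)
    labeled = [(lg, y) for _, _, lg, y in batch if y is not None]
    if not labeled:
        return recon  # purely unsupervised batch
    clf = sum(cross_entropy(lg, y) for lg, y in labeled) / len(labeled)
    return recon + weight * clf


# One labeled sample (perfect reconstruction) and one unlabeled sample.
batch = [
    ([1.0, 0.0], [1.0, 0.0], [0.0, 0.0], 0),
    ([0.0, 2.0], [0.0, 0.0], [0.0, 0.0], None),
]
total = hybrid_loss(batch)
```

Unlabeled samples contribute only through the reconstruction term, so the same network can be trained on both kinds of data in a single pass.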

Training a neural net normally requires a large labeled dataset, which is hard to obtain with reasonable resources. Providing high-quality results when only a small part of the data is labeled is an interesting problem, referred to as semi-supervised learning. In one line of work the authors pre-trained the system using pseudo labels, while in another they embedded the data in a low-dimensional space.